OSC Trackers

VRChat now offers support for receiving tracker data over OSC for use with our existing calibrated full body IK system.

Please note: This is an advanced feature! It is NOT plug-and-play. You must create your own program to transmit this data to VRChat using OSC.

Manufacturers of tracking hardware may provide an application that will send this data for you. You can also use a community-created program to utilize your full-body tracking setup.

OSC Addresses

/tracking/trackers/1/position

/tracking/trackers/1/rotation

/tracking/trackers/2/position

/tracking/trackers/2/rotation

/tracking/trackers/3/position

/tracking/trackers/3/rotation

/tracking/trackers/4/position

/tracking/trackers/4/rotation

/tracking/trackers/5/position

/tracking/trackers/5/rotation

/tracking/trackers/6/position

/tracking/trackers/6/rotation

/tracking/trackers/7/position

/tracking/trackers/7/rotation

/tracking/trackers/8/position

/tracking/trackers/8/rotation

/tracking/trackers/head/position

/tracking/trackers/head/rotation

Each address accepts Vector3 information in the form of 3 floats (X, Y, Z). These should be the world-space positions and Euler angles of your OSC trackers.
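As a rough illustration of what "3 floats per address" means on the wire, the sketch below encodes one OSC message by hand using only the Python standard library and sends a position/rotation pair over UDP. The helper names (`encode_vector3`, `send_tracker`) are made up for this example, and the host and port are assumptions: `127.0.0.1:9000` is commonly VRChat's default OSC input, but check your own configuration.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_vector3(address: str, x: float, y: float, z: float) -> bytes:
    """Build one OSC message carrying three big-endian float32 arguments (type tag ',fff')."""
    return _osc_string(address) + _osc_string(",fff") + struct.pack(">fff", x, y, z)


def send_tracker(sock, host, port, index, position, rotation_deg):
    """Send the position and rotation messages for one tracker.

    index is 1..8 or the string "head"; host and port are assumptions
    supplied by the caller, not values defined on this page.
    """
    base = f"/tracking/trackers/{index}"
    sock.sendto(encode_vector3(base + "/position", *position), (host, port))
    sock.sendto(encode_vector3(base + "/rotation", *rotation_deg), (host, port))
```

With a UDP socket created via `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`, a call such as `send_tracker(sock, "127.0.0.1", 9000, 1, (0.0, 0.9, 0.0), (0.0, 0.0, 0.0))` would emit two datagrams for tracker 1. An existing OSC library would work just as well; the manual encoding is shown only to make the message layout visible.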

Currently up to 8 trackers are supported: hip, chest, 2x feet, 2x knees, 2x elbows (upper arms).

It's not always best to send all 8! You may get better behavior sending only feet and hips, for example. When fewer trackers are sent, VRChat's IK can better compensate for any tracking discrepancy. When your tracking data has high accuracy in absolute position and rotation (no drift), it becomes advisable to start sending more tracking points.
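A sender that starts with only hips and feet might assemble each frame along these lines. This is a minimal sketch: `build_frame` is a hypothetical helper, and the idea that the sender freely chooses which slot number carries which body part is an assumption of the example, not something this page specifies.

```python
MAX_TRACKERS = 8  # "Currently up to 8 trackers are supported"


def build_frame(trackers):
    """Turn {slot: (position, rotation_deg)} into a list of (address, values) pairs.

    Slots must be integers in 1..8. Slots that are absent are simply not
    sent, which is how a sender would transmit fewer than 8 trackers.
    """
    if len(trackers) > MAX_TRACKERS:
        raise ValueError("at most 8 trackers are supported")
    messages = []
    for slot in sorted(trackers):
        if not 1 <= slot <= MAX_TRACKERS:
            raise ValueError(f"tracker slot out of range: {slot}")
        position, rotation_deg = trackers[slot]
        messages.append((f"/tracking/trackers/{slot}/position", tuple(position)))
        messages.append((f"/tracking/trackers/{slot}/rotation", tuple(rotation_deg)))
    return messages


# Three trackers only: hip and both feet (slot assignment is this sketch's choice).
frame = build_frame({
    1: ((0.0, 0.95, 0.0), (0.0, 0.0, 0.0)),   # hip
    2: ((-0.12, 0.05, 0.0), (0.0, 0.0, 0.0)),  # left foot
    3: ((0.12, 0.05, 0.0), (0.0, 0.0, 0.0)),   # right foot
})
```

Each `(address, values)` pair can then be handed to whatever OSC client the sender uses.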

The "head" addresses can optionally be sent to aid in aligning your sender app's tracking space with VRChat's tracking space (see below).

Tracking Space

Assumptions:

- In general, we are using Unity's coordinate system

- +y is up

- Scaled such that 1.0f = 1m in real-world space. Your sending app will likely need to have the user input their real-world height to accommodate this

- Left-handed coordinate system

- Euler angles are in degrees and will be applied in order of Z, X, Y when used internally to produce a quaternion
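The assumptions above can be sketched in code. The example below rescales a position to meters from a user-entered height and composes a quaternion from Euler angles in degrees. Both helpers are hypothetical. In particular, composing the rotation as q_y * q_x * q_z is this sketch's reading of "applied in order of Z, X, Y", so verify it against your own engine before relying on it.

```python
import math


def scale_to_meters(position, sender_height, real_height_m):
    """Rescale a sender-space position so that 1.0 equals 1 m.

    sender_height is the user's height in the sender's own units and
    real_height_m is the height the user typed in, in meters (both are
    assumptions about how a sending app might be organized).
    """
    factor = real_height_m / sender_height
    return tuple(c * factor for c in position)


def _axis_quat(axis, degrees):
    """Quaternion (x, y, z, w) for a rotation of `degrees` about a unit axis."""
    half = math.radians(degrees) / 2.0
    s = math.sin(half)
    return (axis[0] * s, axis[1] * s, axis[2] * s, math.cos(half))


def _mul(a, b):
    """Hamilton product a * b for quaternions stored as (x, y, z, w)."""
    ax, ay, az, aw = a
    bx, by, bz, bw = b
    return (
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
        aw * bw - ax * bx - ay * by - az * bz,
    )


def euler_zxy_to_quaternion(x_deg, y_deg, z_deg):
    """Apply Z first, then X, then Y about fixed axes: q = q_y * q_x * q_z."""
    qx = _axis_quat((1.0, 0.0, 0.0), x_deg)
    qy = _axis_quat((0.0, 1.0, 0.0), y_deg)
    qz = _axis_quat((0.0, 0.0, 1.0), z_deg)
    return _mul(_mul(qy, qx), qz)
```

A sender only ever transmits the Euler angles themselves. The quaternion helper is useful mainly for checking that the sender's own rotation convention produces the same orientation once the angles are applied in this order.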

In principle, this should function similarly to our existing implementation for SteamVR trackers. Due to the challenges of arbitrary tracking data coming in, we've provided new functionality to aid in alignment.

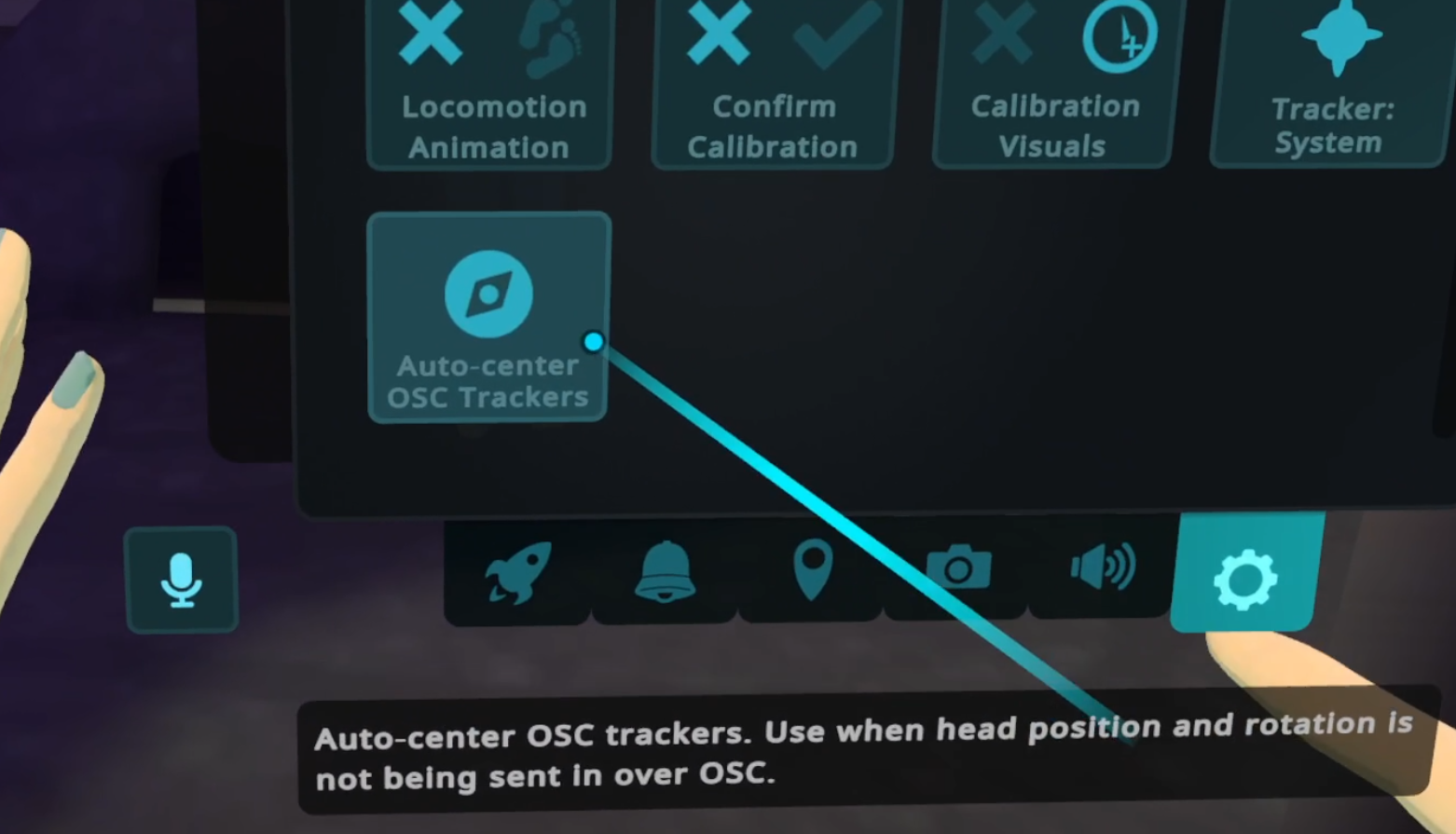

Auto-center OSC Trackers

Warning: This section is out of date.

This is a new button available in the Tracking & IK section of the VRC Quick Menu's "Gear" tab.

This button will find the two lowest trackers on the Y axis, and center their mid-point under the user's current head position in VRChat.

Additionally, it will guess a forward direction based on assuming the two lowest trackers represent left and right feet.

There is no way to determine front vs back from this alone, so clicking the Auto-center OSC Trackers button repeatedly will alternate the forward direction.
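To make the described behavior concrete, here is a small sketch of the geometry: pick the two lowest trackers, find their mid-point, compute the horizontal shift that places it under the head, and take a forward guess perpendicular to the line between the feet. This illustrates the description only and is not VRChat's implementation. The function name and the `flip` parameter (standing in for repeated button presses) are invented for the example.

```python
import math


def auto_center(tracker_positions, head_position, flip=False):
    """Return (offset_xz, forward_xz) for a list of (x, y, z) tracker positions.

    offset_xz moves the mid-point of the two lowest trackers under the head.
    forward_xz is a unit vector perpendicular to the line between them;
    flip selects the opposite perpendicular, since front and back cannot
    be told apart from two feet alone.
    """
    if len(tracker_positions) < 2:
        raise ValueError("need at least two trackers")
    # Two lowest trackers on the Y axis, assumed to be the feet.
    (ax, _, az), (bx, _, bz) = sorted(tracker_positions, key=lambda p: p[1])[:2]
    mid_x, mid_z = (ax + bx) / 2.0, (az + bz) / 2.0
    offset = (head_position[0] - mid_x, head_position[2] - mid_z)
    dx, dz = bx - ax, bz - az
    length = math.hypot(dx, dz)
    if length == 0.0:
        raise ValueError("the two lowest trackers coincide horizontally")
    forward = (-dz / length, dx / length)
    if flip:
        forward = (-forward[0], -forward[1])
    return offset, forward
```

Calling the function once with `flip=False` and once with `flip=True` yields the two opposite forward guesses that the button alternates between.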

Receiving Head Data

/tracking/trackers/head/position

/tracking/trackers/head/rotation

Tracking data sent to VRChat at the above addresses can be used as an alignment reference between your sending app and VRChat. The entire OSC tracking space will be shifted such that /tracking/trackers/head/position aligns with the avatar's head bone position (note this is at the root of the head, not the eye position). This position will be fully aligned per frame, with no interpolation/smoothing.
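The position alignment amounts to a per-frame translation of the whole OSC tracking space. A minimal sketch of that idea follows, ignoring yaw entirely. `align_to_head` is a hypothetical name, and the code models the description above rather than VRChat's internals.

```python
def align_to_head(tracker_positions, osc_head_position, avatar_head_position):
    """Shift every tracker by the offset that maps the OSC head onto the avatar head.

    All arguments are (x, y, z) tuples (or a list of them) in meters.
    The full offset is applied immediately, with no smoothing.
    """
    offset = tuple(a - o for a, o in zip(avatar_head_position, osc_head_position))
    return [tuple(c + d for c, d in zip(p, offset)) for p in tracker_positions]
```

For a sender, the practical consequence is that the head position it reports should correspond to the root of the head rather than the eyes, in the same space as its other trackers.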

Data sent to /tracking/trackers/head/rotation will be used for yaw alignment. It is assumed that Euler angles (0,0,0) represent a neutral forward-looking direction. VRChat's tracking space yaw alignment will slowly lerp towards the rotation provided.

If only a single OSC message is sent for head rotation (rather than continuous streaming of data), the tracking space yaw will perform a one-time instant alignment rather than lerping. This may be useful if the sending app doesn't have reliable head sensor data but can assume the user is facing straight forward during the sender app's own calibration process, for example. The threshold for determining if the OSC message was "single" is 300ms. If a second head rotation message arrives within 300ms, it will be considered streaming data, and the normal 10-second data timeout and lerping behavior will be active.
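The single-versus-streaming rule can be expressed as a check on message arrival times. The sketch below is an illustration of the rule as described, with a hypothetical function name. Whether a gap of exactly 300ms counts as "within" is not stated, so the strict comparison here is an assumption.

```python
SINGLE_MESSAGE_THRESHOLD_S = 0.3  # 300ms, from the description above


def is_streaming(arrival_times_s):
    """Return True if any head rotation message follows the previous one within 300ms.

    arrival_times_s is a sequence of arrival timestamps in seconds.
    A lone message, or messages spaced further apart than the threshold,
    would be treated as single (instant alignment) under this reading.
    """
    times = sorted(arrival_times_s)
    return any(
        later - earlier < SINGLE_MESSAGE_THRESHOLD_S
        for earlier, later in zip(times, times[1:])
    )
```

A sender that wants the one-time instant alignment should therefore make sure it does not emit a second head rotation message shortly after the first, while a sender that wants lerped alignment should stream more often than once every 300ms.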

For the head data, it is possible to send just position, just rotation, both, or neither. When available, the head data will be used for the corresponding alignment. If unavailable, that alignment will not occur. For example, position-only without yaw alignment is possible if only the position is sent.
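On the sending side, the four combinations fall out naturally if the head messages are built from optional inputs. A small sketch, where `head_messages` is a hypothetical helper returning `(address, values)` pairs for whatever OSC client is in use:

```python
def head_messages(position=None, rotation_deg=None):
    """Build the optional head alignment messages.

    Pass only position for position-only alignment, only rotation_deg
    for yaw-only alignment, both for both, or neither to send nothing.
    """
    messages = []
    if position is not None:
        messages.append(("/tracking/trackers/head/position", tuple(position)))
    if rotation_deg is not None:
        messages.append(("/tracking/trackers/head/rotation", tuple(rotation_deg)))
    return messages
```

For example, `head_messages(position=(0.0, 1.6, 0.0))` produces a single position message, which corresponds to the position-only case mentioned above.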

Tracker Models

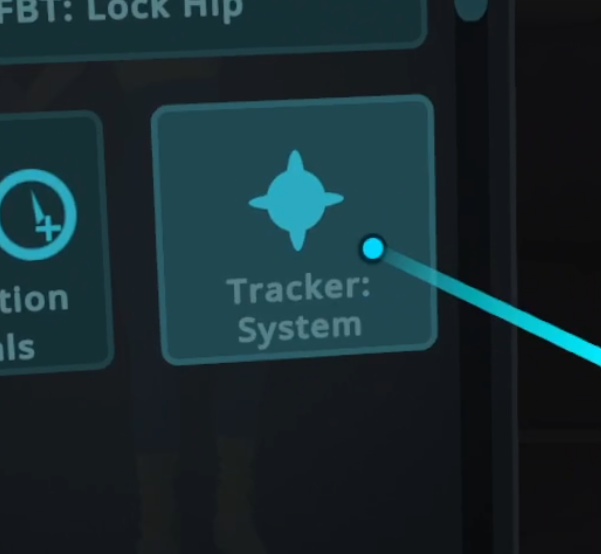

When using the tracker display model setting in the Tracking & IK section of the VRC Quick Menu, if you set the model to "Tracker: System", the models will never disappear, even after calibration. This can aid in debugging.

Example Code

You can see an example script here that could be used to send tracker data from a Unity project. The script assumes that your project contains an avatar that has pose data applied to it by other means. Virtual tracker transforms would then be parented to the appropriate bones on that avatar and slotted into the example script. The script shouldn't be used as-is, however. For example, the pose-avatar's height is hard-coded and must be set appropriately. Please read through the code and use it for educational purposes. It is not intended as a fully functional solution, but rather as a starting point to get you sending OSC Trackers data from a Unity project. Using virtual trackers on a pose-avatar isn't required, nor is using Unity, for that matter. This is just one example of a way to send OSC Trackers data into VRChat.
